주어진 control point로 만들어지는 Bezier 곡선을 계산할 때 De Casteljas 알고리즘을 이용하여 계산한다. 이 경우 control points을 백업하는 과정이 필요하다. 여기서는 직접적으로 Bernstein 함수를 계산하여 Bezier 곡선을 구하자. 우선 $n$차 Bezier 곡선을 Bernstein 다항식을 써서 표현하면

$$ {\bf B}(t) = \sum _{k=0}^n b_{k, n}(t) {\bf Q}_k \\ b_{k, n}(t) = \begin{pmatrix} n \\k \end{pmatrix} t^k (1-t)^{n-k}$$

이므로 이항계수의 계산이 요구된다.

$$ \begin{pmatrix} n \\k \end{pmatrix} = \frac{ n!}{k! (n-k)!} $$

이항계수는 미리 계산된 lookup table을 이용하는 경우가 더 편리할 수 있다: https://kipl.tistory.com/575

// n = degree of a Bezier curve;

double Bezier(int n, double Q[], double t) {

double b = 0;

double tk = 1;

double onetk = pow(1-t, double(n));

for (int k = 0; k <= n; k++) {

double blend = tk * onetk; // bernstein polynomial;

// for the next stage;

tk *= t;

onetk /= (1-t);

// multiply binomial coefficient;

int nf = n; // n! 계산;

int kf = k; // k! 계산;

int nkf = n-k; // (n-k)! 계산;

while (nf >= 1) {

blend *= nf; // multiply by n!

nf--;

if (kf > 1) { // divide by k!

blend /= kf;

kf--;

}

if (nkf > 1) { // divide by (n-k)!

blend /= nkf;

nkf--;

}

}

b += Q[k] * blend;

}

return b;

}'Computational Geometry' 카테고리의 다른 글

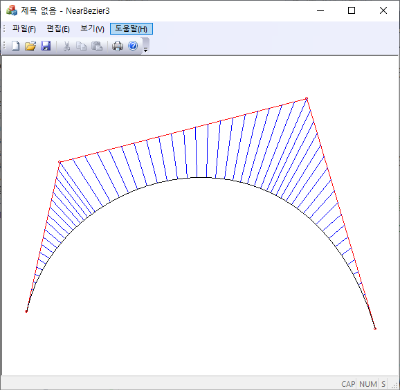

| Subdividing a Bezier Curve (0) | 2024.04.18 |

|---|---|

| Derivatives of Bezier Curves (1) | 2024.04.12 |

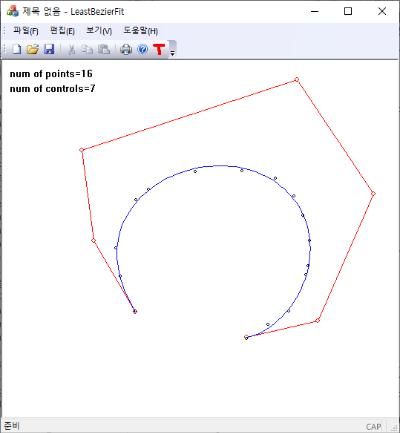

| Least Squares Bezier Fit (0) | 2024.04.05 |

| Why Cubic Splines? (9) | 2024.03.16 |

| Natural Cubic Spline (0) | 2024.03.11 |